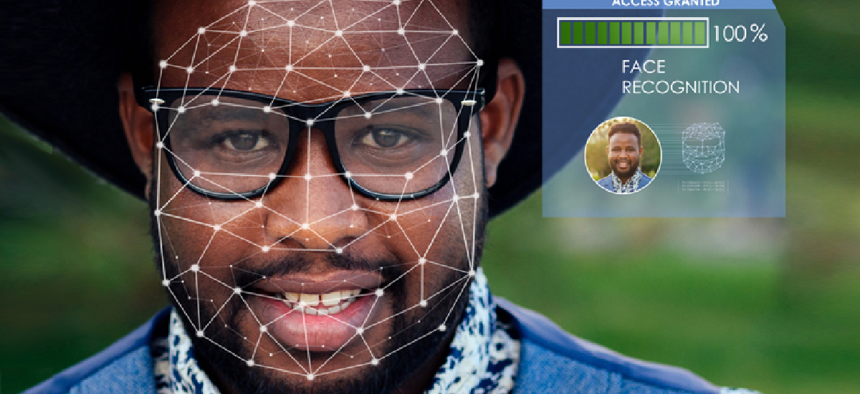

Government should step in to ensure facial recognition technology is developed without biases, experts say.

Facial recognition is not ready for prime time, according to Joy Buolamwini, a computer scientist at the MIT Media Lab. She called on the Federal Trade Commission to do more to regulate the technology.

The benchmarks used to measure the effectiveness of these systems often use images of white men, making it difficult for systems to accurately identify individuals who have darker skin tones, specifically darker-skinned women, she said, pointing to one dataset used by Facebook that was 78 percent male and 84 percent white.

If the technology performs well on these datasets but then struggles with faces that don’t look like those in the benchmark, the result is inflated accuracy numbers, said Buolamwini, who is also the founder of the Algorithmic Justice League, which identifies bias in algorithms and develops practices for accountability.
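To see how a skewed benchmark can inflate a headline number, consider a minimal Python sketch. Every group size and accuracy figure below is hypothetical, chosen only for illustration, and none comes from Buolamwini's research or any real benchmark. The same per-group accuracies are scored twice: once on a benchmark dominated by one group and once on a benchmark where every group is the same size.

```python
# Hypothetical illustration: all counts and accuracy figures are made up.

def overall_accuracy(groups):
    """Return the accuracy over all images: total correct / total images."""
    total = sum(g["n"] for g in groups.values())
    correct = sum(g["n"] * g["accuracy"] for g in groups.values())
    return correct / total

# A benchmark in which one group supplies 80 percent of the images.
skewed = {
    "lighter-skinned men": {"n": 800, "accuracy": 0.99},
    "lighter-skinned women": {"n": 100, "accuracy": 0.93},
    "darker-skinned men": {"n": 60, "accuracy": 0.88},
    "darker-skinned women": {"n": 40, "accuracy": 0.65},
}

# Same per-group accuracy, but every group contributes 250 images.
balanced = {
    name: {"n": 250, "accuracy": g["accuracy"]} for name, g in skewed.items()
}

skewed_score = overall_accuracy(skewed)
balanced_score = overall_accuracy(balanced)

print(f"skewed benchmark:   {skewed_score:.1%}")
print(f"balanced benchmark: {balanced_score:.1%}")
```

In this made-up case the system scores above 96 percent on the skewed benchmark and about 86 percent on the balanced one, even though its behavior on each group is identical in both runs.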

“If this is the gold standard we’re using, we’re giving ourselves a false sense of progress,” she said at a Nov. 14 FTC hearing on competition and consumer protection issues associated with the use of algorithms, artificial intelligence and predictive analytics.

Buolamwini also called out one of the benchmarks used by the National Institute of Standards and Technology, the IJB-A Benchmark, which she said is 67 percent male and includes less than 5 percent darker-skinned women.

These numbers make it “a bit more evident to me why issues I was encountering might not have surfaced in the industry or in research,” she said.

The IJB-A Benchmark is a “faces-in-the-wild” dataset made up of photos without any staging, according to Patrick Grother, a computer scientist at NIST who administers the agency’s Face Recognition Vendor Test. It was “curated without regard to demographic factors,” so it’s not a surprise that its demographics are similar to those presented by many U.S. and European websites, Grother explained in an email.

“As such,” he said, “I agree that the dataset is not suited to detection of demographic differentials.” The images in the IJB-A dataset are, however, accompanied by metadata on gender, skin tone and other information, which would give “developers opportunities to rebalance, or reweight certain groups including any that are under-represented,” he said.
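One common way to reweight, sketched below in Python, is to give each image a weight inversely proportional to the size of its demographic group so that every group counts equally toward the final score. This is an assumption about what reweighting could look like in practice, not a description of NIST's method or of the IJB-A metadata format. The group labels "A" and "B", the results and both function names are hypothetical.

```python
from collections import Counter

def balancing_weights(labels):
    """Weight each sample by n / (k * group_size) so groups count equally."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return [n / (k * counts[label]) for label in labels]

def weighted_accuracy(correct, weights):
    """Share of total weight that falls on correctly handled samples."""
    hit = sum(w for ok, w in zip(correct, weights) if ok)
    return hit / sum(weights)

# Hypothetical metadata: group "A" has 8 images, group "B" has 2.
labels = ["A"] * 8 + ["B"] * 2
# Hypothetical results: all of "A" correct, half of "B" correct.
correct = [True] * 8 + [True, False]

plain = sum(correct) / len(correct)
weights = balancing_weights(labels)
reweighted = weighted_accuracy(correct, weights)

print(f"unweighted accuracy: {plain:.2f}")
print(f"reweighted accuracy: {reweighted:.2f}")
```

With these made-up inputs the unweighted score is 0.90, while the reweighted score is 0.75, the simple average of the two groups' accuracies (1.00 and 0.50). Reweighting of this kind surfaces a gap that the raw figure hides, but it cannot add variety that a small group's images lack.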

Buolamwini worked to develop her own benchmark and tested it against facial recognition systems available from Microsoft, IBM and Face++ (a Chinese company). The results showed significant gaps in how the commercial systems handled both gender and race. The companies released new application programming interfaces after her research was published, but differences persisted, she said.

“It’s up to regulators to protect us,” she said at the hearing.

Buolamwini specifically called on NIST to publicly disclose the “demographic and phenotypic breakdown of their existing benchmarks.”

NIST has taken steps to detect more variation among different demographics by using “much more controlled portrait-style images where extraneous factors like illumination, camera angle and resolution have little variation,” Grother said. NIST plans to talk about this work at the International Face Performance Conference later this month. It will also release its own report on how facial recognition systems handle differences in gender and skin tone in spring 2019.

It’s also important to address the privacy implications of the technology, especially when companies like Facebook have easy access to our faceprints, Buolamwini said. Plus, 117 million Americans may have their photos in law enforcement facial recognition databases, according to a 2016 study from Georgetown University.

“You can change your password,” she said. “You can’t necessarily change your face.”

Jennifer Wortman Vaughan, a senior researcher at Microsoft Research, acknowledged incidents of bias “highlight how important it is to get AI right” so that it does not have a negative impact on society.

“The data is really what matters here,” Vaughan said, adding that training data should reflect the community where the recognition tool will be used.

Earlier this year, Microsoft President Brad Smith called for government to regulate facial recognition technology. “The only effective way to manage the use of technology by a government is for the government proactively to manage this use itself,” Smith wrote. “And if there are concerns about how a technology will be deployed more broadly across society, the only way to regulate this broad use is for the government to do so.”
